![[*]](/usr/share/latex2html/icons/footnote.png)

Co-evolving Real-Time Strategy Game Players

Chris Miles, Member, IEEE,

and

Sushil J Louis, Member, IEEE

Abstract:

This paper investigates the co-evolution of real-time strategy game

players. First, since existing commercial RTS games are not suitable

for evolutionary computing based research methods, we describe a new

RTS game platform, Lagooncraft, developed for our work. Second, we

formulate the problem of playing a real-time strategy game as solving

a sequence of spatially resolved resource allocation

problems. Influence map trees encode RTS game playing strategies by

taking advantage of this problem formulation. Finally, we describe a

new co-evolutionary method which generates competent RTS game-playing

strategies that routinely beat human opponents in Lagooncraft.

Computer and video games are becoming increasingly integrated into

modern culture. Like checkers and chess before them, video games have

recently started becoming a focus of serious

research [1,2,3,4,5]. Commercial developers of computer players (game AI)

for video games tend to utilize finite state machines, rule-based

systems, and other such knowledge intensive approaches. These

approaches work reasonably well, at least until a human player learns

their habits and weaknesses, but require significant player and

developer resources to create and tune to play competently.

Development of game AI therefore suffers from the knowledge

acquisition bottleneck well known to intelligence researchers and

common to many real world intelligent systems.

Real-Time Strategy (RTS) games are a particularly interesting genre of

modern video games. They focus player involvement around making

long-term strategic decisions, map to many challenging real world

problems, and have yet to be the focus of extensive research. Many

excellent commercial RTS games exist, including Starcraft, Dawn of

War, Supreme Commander, Company of Heroes, and Age of

Empires [6,7,8,9,10]. In these games,

players are put in charge of a military base or colony with buildings,

troops, and money under their control. They play by allocating these

resources: spending money to produce units and construct buildings,

while assigning various tasks to units. Units carry out these orders

automatically, and players win the game by destroying opposing

players' units and buildings. Video games are fundamentally about

making decisions and exercising skills. We are interested in RTS games

that concentrate player involvement around making high level, long

term strategic decisions.

While varying greatly in content and style, a set of game-playing

decisions unites RTS games into a genre. Most of these decisions can

be categorized as either resource allocation problems: how much money

to invest on improving my economy, what kind of troops to field,

or what technological enhancements to research; or as spatial

reasoning problems: which parts of the world should we try to control,

how should we assault this defensive installation, or how do we

outmaneuver our opponent in this battle. By developing players capable

of making these challenging and relevant decisions, we can develop

systems capable of tackling important real world problems.

Specifically, we use genetic algorithms to search for and find good

RTS game-playing strategies. RTS games have, by design, a non-linear

search space of potential strategies, with players making interesting

and complex decisions - many of which have difficult to predict

consequences later in the game. To explore this non-linear search

space we use co-evolutionary genetic algorithms, which have been

historically effective at difficult search problems.

This paper makes two new contributions. First, we define a new RTS

combat estimation system that determines the outcome of in-game

combat. Second, we describe and evaluate a new co-evolutionary genetic

algorithm that co-evolves human-competitive RTS game players that play

the complete game. That is, the co-evolved RTS players manage an

economy, build up a military, and direct this military force to

destroy an opponent's headquarters. Along the way, we provide an

introduction to RTS games and RTS game research, formulate the problem

of generating strategies as a spatially resolved resource allocation

problem, and describe our evolutionary representation for this problem

formulation.

To use evolutionary computing techniques to generate a competent

player for LagoonCraft or other RTS game, we need 1) an evolvable

representation for RTS game playing strategies and 2) a fitness metric

to evaluate competing RTS strategies. The next subsection provides an

overview of our representation. A co-evolutionary algorithm can

determine fitness based on game scores obtained by playing two

strategies against each other. Subsection I-B

overviews our co-evolutionary genetic algorithm.

We developed an Influence Map based Artificial Intelligence player or

IMAI, a general RTS game-player that uses an influence map to

represent partial spatial information in our encoding of RTS strategy.

An influence map (IM) is a grid placed over the world, with values

assigned to each square by some function (IMFunction). Once

calculated, each influence map relates some spatial feature or concept

determined by the IMFunction. Influence maps evolved out of work done

on spatial reasoning within the game of Go and have been used

sporadically since then in various video games [11].

The IMAI plays the game according to a set of parameters which allow

the specification of a wide variety of effective and competent

strategies. To make spatial reasoning decisions we use a tree of

influence maps, Influence Map Trees (IMTrees), to define a novel

spatial reasoning system that provides a representation of complete spatial reasoning strategies. IMTrees are used by the

IMAI to analyze the current game-state to find spatial objectives for

the IMAI to carry out. These objectives tell the player where to

attack, defend, expand and build. Combining objectives from the

spatial reasoning system with non-spatial objectives, the IMAI creates

a directed graph describing all interrelated objectives the player is

working towards. This graph can then be analyzed, using our new

combat estimation algorithm to predict the outcomes of potential

battles, to estimate benefits and assign priorites for each of the

objectives. The IMAI then searches for the resource-objective

allocation that maximizes the benefit acheivable with currently

available resources. This allocation can then be converted to

game-playing commands and used to play the game.

Co-evolutionary Qeueu based GA

The IMTrees representation is used by a co-evolutionary GA which

encodes key IMAI parameters within the individuals of its population,

each of which then represents a distinct game-playing strategy. By

playing individuals in the population against one another, analyzing

the results of those games, and using standard genetic operators to

create new individuals we co-evolve towards increasingly competent

game-playing strategies. Results show that co-evolution produces

innovative, robust, strategies capable of defeating a suite of

hand-coded opponents and humans. These results are based on

LagoonCraft, a real-time strategy game combining game-play elements

from several popular commercial games developed as a test-bed for

research. LagoonCraft follows most RTS game-playing paradigms while

allowing for faster then real-time evaluations across a large

distributed co-evolutionary system.

The next section provides an overview of RTS games and academic

research in RTS games. Section ![[*]](/usr/share/latex2html/icons/crossref.png) describes

LagoonCraft, the RTS game developed to facilitate this research. This

section discusses the units, buildings, and decisions involved in

playing LagoonCraft. More information on LagoonCraft is available at

http://lagoon.cse.unr.edu, including the complete source code.

LagoonCraft was initially described at the Congress on Evolutionary

Computation in 2006 [12]. These two section may serve

as a survey or tutorial for readers new to this genre of video

games. You can skip these sections, if you have experience playing

commercial RTS games and are familiar with research in this area.

describes

LagoonCraft, the RTS game developed to facilitate this research. This

section discusses the units, buildings, and decisions involved in

playing LagoonCraft. More information on LagoonCraft is available at

http://lagoon.cse.unr.edu, including the complete source code.

LagoonCraft was initially described at the Congress on Evolutionary

Computation in 2006 [12]. These two section may serve

as a survey or tutorial for readers new to this genre of video

games. You can skip these sections, if you have experience playing

commercial RTS games and are familiar with research in this area.

In order to better understand the co-evolutionary genetic algorithm,

section ![[*]](/usr/share/latex2html/icons/crossref.png) summarizes the new problem formulation and describes

the architecture of our RTS game-player. This chapter provides a

summary of our approaches to spatial reasoning, resource allocation,

and combat estimation [12,13,14].

summarizes the new problem formulation and describes

the architecture of our RTS game-player. This chapter provides a

summary of our approaches to spatial reasoning, resource allocation,

and combat estimation [12,13,14].

Section ![[*]](/usr/share/latex2html/icons/crossref.png) then describes the co-evolutionary genetic

algorithm and our encoding of RTS strategies, as well as the

architecture and execution model used by the distributed

co-evolutionary algorithm. Results showing the effectiveness of this

new co-evolutionary approach are in section

then describes the co-evolutionary genetic

algorithm and our encoding of RTS strategies, as well as the

architecture and execution model used by the distributed

co-evolutionary algorithm. Results showing the effectiveness of this

new co-evolutionary approach are in section ![[*]](/usr/share/latex2html/icons/crossref.png) . The last

section provides conclusions and directions for future work.

. The last

section provides conclusions and directions for future work.

Real-Time Strategy games (RTS) are a genre of computer and video

games. They take place in real-time and involve resource gathering,

base building, technology development and high-level control over

individual units. Within an RTS game, players become the leader of a

colony or military base. For example, in Supreme Commander (SupCom),

shown in Figure 1, the player controls an

Armored Command Unit (ACU), which is an enormous robot capable of

constructing and controlling an army of other robots via

nano-technology.

Figure 1:

Supreme Commander [8]

|

At the beginning of the game several players are teleported into the

world, where they begin by constructing a base. RTS games have a

strong economic side, with players in SupCom constructing, upgrading

and managing a variety of buildings that produce the two basic

resources - mass and energy. You invest these basic resources

into improving your economy, fortifying your base, strengthening

your military, and developing various forms of technology. SupCom

has hundreds of military units encompassing land, sea and air

theaters. Once constructed, you maneuver your military units

around the world, attacking and engaging enemy units. The ultimate

goal being to destroy the ACU and level the base of any opponents

foolish enough to challenge your supremacy.

Figure 2:

Company of Heroes, another RTS game [9]

|

Underneath the surface game-play, video games are fundamentally about

making decisions and exercising skills. RTS games, while varying

greatly in content and style, are unified by a set of common decisions

that their players make. These decisions involve a variety of

challenging problems that players are simultaneously solving:

developing their economy, training and equipping an effective

military, estimating the location and composition of enemy forces,

predicting incoming attacks or defensive vulnerabilities, while hiding

and misleading their enemies about their own intentions. Real-Time

strategy games present a unique opportunity for research, containing a

variety of interesting research problems within an integrated and

motivated whole. The next subsections detail decisions common to many

RTS games - how they are presented through RTS game-play and how they

relate to real world problems.

Resource allocation decisions involve allocating a limited set of

resources to a set of objectives or tasks; dividing available funds

between economic development and military expansion, distributing

military production amongst various types of units, and deploying

combat forces along several simultaneous fronts. Resource allocation

decisions are extremely common in RTS games, one of the most common

being the ``Guns versus Butter" decision where players make a

trade-off between developing their economy or expanding their

military.

Figure 3:

Production Possibilities Frontier

|

In economics, this problem is a well studied manifestation of a

production possibilities frontier. A production possibilities

frontier exists when a producer has to compromise between two goods to

produce. In Figure 3, for example, the producer is

compromising between producing tanks and farms. Producing more tanks

comes at a cost of decreased farm production. In RTS games players

compromise between reinvesting in their economy and expanding their

military. This is a highly complex problem in both the real and

virtual worlds. The player who maintains a stronger economy almost

always wins in the long-term, but decisive strikes by a player with a

military advantage can devastate an opponent's economy.

Spatial reasoning problems involve decisions made about the spatial

shape of the battlefield: where do I attack, where do I defend, which

parts of the world should I try to control, how should I assault this

defensive installation, how do I outmaneuver my opponent. These

fundamentally difficult decisions form a significant part of RTS

game-playing strategy and relate to many other problems in computer

science. To help make spatial reasoning decisions within the realm of

RTS games we developed Influence Map Trees (IMTrees) discussed in

Section VI-B5.

Opponent Modeling problems arise in RTS games because RTS players play

with an incomplete picture of the game-state. In contrast to games

like chess, and in common with games like poker, RTS games do not

present their players with complete knowledge of the game-state.

Figure 4:

Warcraft 2 - Fog of War [15]

|

Players can only see enemy units within the vicinity of their own

troops, the rest of the world is covered in a ``fog of war.''

Figure 4 is a screen-shot from Warcraft 2 showing

the traditional three levels of information. Inside the black area in

the upper left, no information is available. If a friendly unit

passes through the vicinity of that area, then it will be promoted to

the next level of information which is shown as the gray layer.

Inside this level the terrain has been revealed but enemy units are

concealed. The lighter areas, around the shipyard and the lumber-mill

are currently visible, with any enemy units in those areas revealed.

The fog of war obscures enemy units or buildings within it, allowing

players to deceive and mislead one another. Developing systems

capable of deceiving, misleading, and anticipating one another has

been the subject of significant research interest, particularly within

the game of poker [16,17,18,19,20,21,22,23,24,25,26].

Force Composition

Force composition decisions involve players determining how to

allocate their military budget amongst the various types of troops

they can build. For example, suppose a player in SupCom is planning

an attack against an opponent early in the game. There are four

different tier-one ground units available, determining how many of

each to bring to the attack is a force composition decision. Players

win battles in RTS games by bringing a superior mix of units to the

fight. Force composition is less about numbers and more about counter

units and cohesive strategies. RTS games are designed to not have

dominating strategies, they enforce this by having units follow a

paper-rock-scissors model. No single type of unit can defeat all

other types of units, each has its own strengths and weaknesses

against the various types of adversaries.

Figure 5:

Paper Rock Scissors Dominance - Archers Dominate Infantry, Which Dominates Cavalry, Which Dominates Archers

|

In a medieval combat game for instance a player might have three

units: archers, infantry, and cavalry. In this game archers defeat

infantry, cavalry defeat archers, and infantry defeat cavalry. This

leads to a paper-rock-scissors model as shown in

Figure 5.

Well made games do this in interesting ways: infantry dominate archers

or cavalry in close combat, but archers quickly reduce them from range

before they can engage, while cavalry can move quickly enough to avoid

significant losses to the ranged attacks of archers. Force

composition decisions quickly become intertwined with spatial

reasoning decisions. Placing infantry in front of archers, for

example, protects them from opposing cavalry, forcing opponents into

difficult compromises. A modern commercial RTS game like Medieval

Total War 2 might have hundreds of types of units organized into

dozens of categories with many complex interactions, all of which

provide opportunities for players to utilize. Determining the proper

composition of units is a complex and challenging problem, I approach

this problem through an objective chaining and combat estimation

system described in Sections ![[*]](/usr/share/latex2html/icons/crossref.png) and

and

![[*]](/usr/share/latex2html/icons/crossref.png) respectively.

respectively.

There has been some research in developing RTS game-players. Before

describing our approach, the next section introduces related work

within the realms of academic and industrial AI.

Background and Related Work

This section assumes that the reader knows genetic algorithms and thus

goes directly to describing co-evolution, and the co-evolutionary

techniques used heavily in this works [27]. It then

explores previous work in RTS games, including traditional game AI

research and commercial AI techniques.

A canonical genetic algorithm evaluates individuals in isolation,

relying on an objective fitness function to measure an individuals

utility. Many problems exist, particularly within games, where it is

difficult to judge the quality of an individual without comparing it

against other individuals. If Bob the chess player asks you how good

of a player he is, it is difficult to assign him a rating without

having him play a game against an opponent. Co-evolutionary Genetic

Algorithms are GA's where the fitness of an individual is evaluated

with respect to other members of the population. In the past

co-evolution has been successful in games such as chess and

checkers [2] and should be equally applicable within

the realm of RTS games.

Often it is advantageous for the population to contain a set of

individuals representing a variety of good solutions, instead of

converging on the single best solution. Most interesting games

exhibit paper-rock-scissors dynamics, where no single strategy

dominates all other strategies. Consider the case where Strategy A

defeats strategies B through J, while strategy K defeats only

strategies A and D. While strategy A would be considered the best

single strategy, defeating virtually all opponents, it still has a

valid counter strategy and the final population should contain

individuals A, K, and the best counter strategy for K. A traditional

GA would assign higher fitness to strategy A, and the population would

eventually converge on that strategy. To prevent this, several

techniques have been developed to help maintain diversity. I next

describe two such niching techniques.

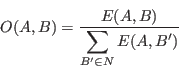

Fitness sharing is the best known and most widely used niching

technique. It was originally introduced by Holland [27]

and improved by Goldberg and Richardson [28].

Fitness sharing modifies the search landscape by reducing the payoff

in densely populated regions, lowering each individual's fitness

proportional to the number of similar individuals in the population.

Generally the fitness is divided by the niche count, determined by a

problem specific ``niche" function which determines the number of

similar individuals in the population.

|

(1) |

In our example, suppose  copies of strategy

copies of strategy  exist in the

population,

exist in the

population,  copies of strategy

copies of strategy  exists, and

exists, and  copies of

strategy

copies of

strategy  exist. Each individual receives fitness equal to the

number of other strategies they defeat, divided by the niche count.

Strategy

exist. Each individual receives fitness equal to the

number of other strategies they defeat, divided by the niche count.

Strategy  individuals receive

individuals receive  fitness, strategy E receives

fitness, strategy E receives

and strategy K receives

and strategy K receives  . Strategy

. Strategy  has the highest

fitness, and will likely gain a larger share of the population in the

next generation. The system should converge on a ratio of

has the highest

fitness, and will likely gain a larger share of the population in the

next generation. The system should converge on a ratio of

population A,

population A,

population E,

and

population E,

and

population K. Fitness sharing is effective,

but creating the fitness niching function can be difficult as it

requires a measure of similarity between players. We use a form of

fitness sharing developed in section

population K. Fitness sharing is effective,

but creating the fitness niching function can be difficult as it

requires a measure of similarity between players. We use a form of

fitness sharing developed in section ![[*]](/usr/share/latex2html/icons/crossref.png) to maintain

niching within our population.

to maintain

niching within our population.

An alternative scheme is DeJong's crowding [29], where only a

small portion of the population reproduces every generation, and

offspring replace the most similar individual in a random sample of

the population. This makes it difficult for a single strategy to

dominate the population since children of good individuals tend to

replace each other instead of replacing different strategies.

Crowding does not tend to develop the kind of stable populations that

fitness sharing does, and it relies upon a ``crowding factor"

coefficient that is difficult to set. I do not use crowding in this

work.

A large body of work exists in which evolutionary methods have been

applied to

games [2,30,4,31,3].

However the majority of this work has been applied to board, card, and

other well defined games. Such games have many differences from

popular real time strategy (RTS) games such as Starcraft, Total

Annihilation, Homeworld or Dawn of

War [6,32,33,7]. Many

traditional board, card and papers games use entities or pieces that

have a limited space of positions (such as on a board) and restricted

sets of actions (well defined movement). Players in these games have

well defined roles, and the information available to players is well

defined. These characteristics make the game state easier to specify

and analyze. In contrast, entities in RTS games exist and interact

over time in continuous three dimensional space. Players do not

directly control entities, but instead provide them with higher level

goals that lower level AI controllers work to accomplish. For

example, players do not tell their units exactly how to maneuver or

which specific enemy unit to fire upon with a specific weapon.

Instead players give high level commands such as move to this area,

fighting everyone you see. This adds a level of abstraction not found

in traditional games. These issues push RTS games into a category

separate from traditional board games, requiring new algorithms and

techniques. John Laird [34,35,36]

surveys the state of research in using AI techniques in interactive

computer games, describing the importance of such research while

providing a taxonomy of games and their related problems.

Apart from minimax and its variations, several recent research

projects have attacked the development of computer and video game

players. Soar is a symbolic cognitive architecture, created by John

Laird, Allen Newell, and Paul Rosenbloom at CMU [37]. Soar has

been applied to two commercial games, Quake 2 and Descent 3, both of

which are first-person shooters. While these kinds of games and their

associated decisions differ significantly from RTS games, there are

elements of how Soar uses long-term objectives subdivided into more

immediate tasks that resemble our objective chaining system.

Orts, a real-time strategy game engine developed at the University of

Alberta by Michael Buro and his students, was developed in parallel

with this work and has similar goals [38].

Figure 6:

Orts Screen-shot

|

The primary Orts game, shown in Figure 6 is built

around the game-playing mechanics used in Starcraft. While several

players have been developed using Orts, the work has primarily focused

on lower level tactical scenarios, using traditional techniques for strategic play.

Commercial Real-Time Strategy Game AI

RTS games have game-states that cannot be easily enumerated - units

exist in continuous space and time, and both players act

simultaneously. Because of this many traditionally successful AI

techniques such as Minimax cannot be easily applied. Most commercial

RTS games use traditional AI approaches such as decision trees, expert

systems, and scripted systems. These systems generally follow an

``attack in waves" approach: building up troops for a few minutes,

with developers scripting which troops to build; sending those troops

off to attack and die, repeating with the next wave until the game is

over. Simple systems handle the rest of the AI player's decisions,

managing the economy according to pre-programmed rules and using

simple algorithms to determine base layout. For example,

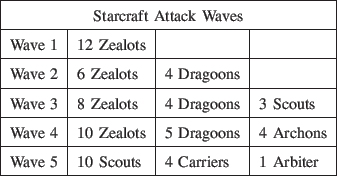

Starcraft [6], the best selling RTS game and second best

selling PC game so far in history, uses the attack in waves system

described by the following table.

While this may provide a challenging opponent a handful of times,

competent players quickly learn dominating counter strategies that

allow them to easily defeat computer opponents virtually 100% of the

time. This is boring.

Developers use these kinds of systems because they are a low risk way

to provide specific experiences to players. Developers can be

confident that at time T in the game their AI will perform action A,

leading player into situation B at which point players can overcome

seemingly insurmountable odds by making decision C. While this works

fine for long scripted campaigns, in shorter skirmish matches it

quickly becomes an exercise in seeing the AI do the same actions time

and time again. These systems have a significant cost in expert

knowledge as well, requiring extensive tuning and testing to produce

even moderately challenging players. Even the best scripted systems

are inherently predictable and fragile, with human players quickly

learning dominant counter strategies. To counter this, virtually all

commercial RTS AI systems cheat to provide a more interesting

experience for competent human players - providing the AI with a

complete map of the world as well as additional resources. While more

modern AIs have made strides to overcome these criticisms, generally

by employing subtler cheats, RTS AI systems have yet to approach the

realm of human-level competence without cheating.

As a testbed for this kind of research we next describe LagoonCraft, a

purpose built RTS designed for serious intelligence research, not

entertainment.

LagoonCraft

One of us, Chris Miles, developed LagoonCraft, a real-time 3D naval

combat strategy game, as a platform for this research. While there

are many excellent RTS games, they are developed for entertainment and

are not an optimal environment for research. LagoonCraft has

game-play similar to other RTS games: players gather resources,

construct buildings, create units, and then maneuver those units to

conquer the world. LagoonCraft has two types of resources: oil,

produced by capturing and holding resource points; and power,

generated by constructing power generators. The two fundamentally

different modes of generating resources requires players to compromise

between fighting to expand and control as many points as possible to

maximize oil income while reinvesting as much as possible into power

generators to maximize power income. Figure 7 shows

a screen-shot from LagoonCraft where the blue player is attacking from

the top right, pushing a heavy destroyer through red's lines and

flanking around the left side of the screen. The red player struggles

to maintain a defensive line, pulling back larger ships to engage the

destroyer while smaller boats engage blue's second attack wave.

Figure 8:

LagoonCraft Unit Dominance Graph

|

LagoonCraft has a variety of units organized into three tiers: tier

one units are squads of small boats armed with light weapons, tier two

units are medium sized frigates, and tier three contains heavy

destroyers and cruisers. Units have a complex paper-rock-scissors

dominance graph among them, shown in Figure 8.

Unit to unit dominance is primarily enforced by weapon and armor

characteristics: the missiles fired by a hellfire frigate have

significantly more penetration then the heavy machine guns on an

assault frigate, making them more effective against larger units,

however the missiles are not accurate enough to effectively engage

smaller boats, leading to paper-rock-scissors subgraphs like the one

between the two frigates and the RPG boat in

Figure 8.

Players must carefully choose which types they construct, trying to

bring the mix of units that best counters their opponent's forces.

Higher tier units provide more firepower for their cost in oil, but

are more expensive in terms of power. This allows players to choose

divergent economic strategies: concentrating on capturing oil points

to produce lots of lower tier units, or reinvesting in power

generators to produce higher tier units.

LagoonCraft has a selection of buildings that players can construct shown in Figure 9.

Figure 9:

Buildings in Lagoon

|

Players start the game with a headquarters that constructs scouts and

generates a modest oil income. More headquarters can be built, and

while prohibitively expensive, you lose if all of your headquarters

are destroyed. Resources are generated by oil platforms, which are

captured but cannot be constructed, and power generators which are

built normally. There are three factory buildings that construct

units: a dock which constructs tier one units, a harbor which

constructs tier two units, and a shipyard which constructs tier three

units. The higher tier factories are bigger, more expensive, and more

difficult to destroy.

Figure 10:

Squads of Boats in LagoonCraft Moving In Formation

|

Instead of having players control every action of every one of their

units and buildings, LagoonCraft, like all RTS games, uses a

hierarchical AI system to distribute the work. At the highest level,

players (human or CI) directly make broad decisions which are passed

down to the lower level AI's. The middle level AI system deals with

timing and coordination, breaking long term tasks into smaller ones

that can be handled by the unit AI. At the lowest level, the unit AI

controls individual units to achieve short term tasks via immediate

actions like maneuvering to avoid objects, staying in formation, and

maintaining proper firing positions. Since there is no computational

intelligence involved in the middle and unit AI levels we do not

discuss them futher in this paper.

LagoonCraft is a substantial project, with around 300,000 lines of c++

code and nearly 50,000 lines of python code. Once developed it has

provided an excellent testbed for research in developing RTS

players. More information on LagoonCraft can be found at

http://lagoon.cse.unr.edu. It has been used for a variety of other

projects, both at UNR and within the greater community.

The LagoonCraft research testbed allowed the investigation of a new

approach to play RTS games. This new approach is detailed in the next

chapter.

Developing an RTS Game-Player

We seek to design an AI that can make RTS game-playing decisions.

Playing LagoonCraft requires algorithms that can make the variety of

game-playing decisions presented by the game, including spatial

reasoning, resource allocation and opponent modeling. The goals for

the IMAI are to develop a player that plays complex and robust

strategies while containing those strategies within a representation

amiable to evolution. The IMAI works on the abstract level by casting

the play of the game as a resource allocation problem. IMAI players

run in the continuous loop shown in Figure 11.

Figure 11:

IMAI Main Loop

|

Each iteration has three major phases: resource identification,

objective creation, and resource allocation. In the resource

identification phase, our system analyzes the current game-state

determining which resources (money, buildings, units) are available

for the player to allocate. In the objective creation phase the IMAI

outlines objectives to achieve. Spatial objectives are first created

through a spatial reasoning system as described in

Subsection VI-B. The IMAI then creates

non-spatial objectives including creating units, constructing

buildings, and determining the importance of gathering resources. The

relationships between objectives form a directed graph which

propagates benefit from objectives to their prerequisites as described

in Subsection VI-D.

This produces a set of objectives with associated benefits. The IMAI

then uses an allocation system to search for good allocations that

maximize the benefit realizable with currently available resources as

described in Subsection VI-E. Finally, the

IMAI converts this allocation into the actual commands used to play

the game.

Identifying Resources

We define a resource as something players utilize to achieve

objectives. The IMAI currently identifies the following types of

resources:

- Basic Resources: Oil, Power

- Builders: Capable of constructing units

- Units: Capable of moving, fighting and capturing

- Build Points: Places buildings can be constructed

Identifying resources requires the AI to parse the game-state and

determine the relevant information. Most of this information is

readily provided by the game. The final and most complicated category

of resources, build points are unique in that they represent locations

on the map to construct buildings, something that requires a spatial

reasoning decision to determine. To identify these locations We use a

simplification of the spatial reasoning system used to create

objectives. We develop this general spatial reasoning system in the

next section.

Spatial Reasoning

The spatial reasoning system analyzes the game-state in order to

produce a set of spatial objectives for the IMAI to carry out, see

Figure 12. Since spatial reasoning

decisions form much of the core RTS game-play, the IMAI represents its

spatial reasoning strategies in a way amenable to evolution. While

spatial reasoning is an area of significant research in computer

science and powerful techniques such as qualitative constraint

calculi [39] exist, We develop a novel spatial reasoning

system - IMTrees - to represent spatial reasoning decisions in an

evolvable way.

Figure 12:

Spatial Reasoning Pipeline

|

An enormous variety of spatial reasoning strategies exist in RTS games

- even among seemingly simple decisions like where to place basic

buildings complex strategies emerge. For example, Starcraft players

can use basic supply depots to funnel enemy melee units into well

defended kill zones. Representing these strategies in a generic and

expressive way while maintaining enough focus to be evolvable is one

of the cornerstone problems tackled in this work. Inspired by a wide

variety of sources, We use a tree structured representation forming

simple building blocks into a larger structure to contain complex

ideas within a simple representation. Specifically, we use Influence Maps (IMs) as

a basic spatial reasoning block to contain a simple spatial concept

such as "away from enemy factories". By forming these blocks into an

Influence Map Tree or IMTree We develop a representation capable of

containing complex spatial reasoning strategies. IMTrees have

similarities to Genetic Programming, an outgrowth of genetic

algorithms that evolves tree structured programming elements to solve

a variety of problems [40]. While IMTree based spatial

reasoning has significant differences, the successes and failures of

genetic programming highlight many of the strengths and weakness

involved in evolving tree structured representations. In the next two

sections We describe influence maps and influence map trees, the

foundations of my spatial reasoning system.

An influence map (IM) is a grid placed over the world, with values assigned to each square by some function (IMFunction).

Once calculated, each influence map relates some spatial feature or concept determined by the IMFunction.

Influence maps evolved out of work done on spatial reasoning within the game of Go and have been used sporadically since then in various video games [11].

Figure 13 is a visualization of an influence map, with the IMFunction being the number of triangles within some radius.

If each triangle was a resource location, and the radius of the circle was the effective resource gathering distance, this IM could be used to find optimal resource gathering locations for any situation.

Figure 13:

Influence Map Displayed as Numbers

|

Influence maps in video games developed to meet the needs of turn based war-games, predecessors of real-time Strategy games, that placed a high priority on competent AI.

Influence maps are still used in a variety of games, especially within the RTS genre.

For example, Age of Empires [10] uses influence maps to determine building locations (as part of its attack in waves system) using an IMFunction based on nearby related resources.

For example, to construct a granary, which stores food, an IM summing up the number of food producing entities within the effective radius of that building is created.

By finding locations in the IM with high values, the AI can determine good locations to place its buildings.

Figure 14:

Influence Maps for Go, see GoBlob [41]

|

Influence maps can be rendered in a graphical format that humans can read fairly easily - Figure 14 is displaying various characteristics of a Go board [41].

Because of their readability influence maps can be developed, tested and debugged in ways not possible with other spatial reasoning approaches such as neural networks.

IMs have traditionally been hand-coded to solve particular problems, with a set of IMs combined through a weighted sum to produce solutions to more complicated problems.

In contrast, We use a tree structure to allow for a more expressive and general representation.

Influence Map Specifics

We use one fundamental type of influence map, the ``near" IM, which has values based on the vicinity or distance from a type of unit or building.

To completely define an IM we need to specify a number of parameters and coefficients, listed below:

- Relevant side encoded as a two bit binary integer, is one of the following:

- Friendly units

- Enemy units

- Neutral units

- Any side

- Relevant Entity Category encoded as a two bit binary integer, is one of the following

- Units

- Buildings

- Resource points

- Effective Radius encoded as a two bit binary integer, is one of the following:

meters

meters

- Effective weapon range of relevant units

meters

meters  Unit Size

Unit Size

- How values are distributed within the circle encoded as a two bit binary integer specifying one of the following types of distributions:

- Highest values near the unit, linearly fall off towards zero at the radius

- Highest value along the circumference, linearly falling off towards the unit

- Consistent values throughout the circle

- Effect only the single closest square

- What values to assign encoded as a two bit binary integer specifying one of the following values or sources:

- One

- Value of relevant entities

- Power of relevant entities, where power is an abstract value assigned to the entity denoting tier

-

of relevant entities

of relevant entities

- Radius Coefficient which is multiplied by the effective radius and ranges between

and

and

- Value Coefficient which is multiplied by the value within each square of the IM, and ranges between

and

and

For example, in Figure 15 there is a single friendly headquarters, which is adding value to the squares surrounding it based on the value of the headquarters.

Values linearly fall off as you move away from the headquarters up to a maximum radius of  meters multiplied by a radius coefficient of approximately 1.3.

meters multiplied by a radius coefficient of approximately 1.3.

Figure 15:

Near Friendly Buildings

|

Influence Map Trees

We contain IMs within a tree structure instead of the traditional weighted list [11] to allow increased complexity while retaining the same basic components.

Each tree represents a complete decision making strategy in a form that can be encoded and evolved by a genetic algorithm.

Figure 16:

Influence Map Tree

|

Leaf nodes in the tree are regular IMs, using an IMFunction to generate their values based on the game-state.

Branch nodes perform operations on their children's values in order to create their own values.

These operations include simple arithmetic operators to form new values, such as combining their children's values in a weighted sum or multiplication to form new values.

These nodes also perform processing on their children, smoothing or normalizing their values.

Many game AI developers use specialized post-processing to manipulate and customize their influence maps.

For example, Age of Empires [10], uses multi-pass smoothing on influence maps to determine locations on which to construct buildings.

By allowing nodes in my tree to perform such processing, a single IMTree can concisely represent a large variety of spatial reasoning strategies.

An example of an IMTree is shown in Figure 16.

This IMTree is an evolved strategy determining where the player should place buildings.

The first leaf IM is a "near friendly buildings" node, shown in Figure 15, which tells the player to build buildings in the general area of existing ones.

The second leaf IM is a "negative values near friendly buildings" node, shown in Figure 17, which tells the player not to build too close to existing buildings.

The second IM differs from the first in that its radius coefficient is smaller, and its value coefficient is negative, leading it to have high negative values within a smaller radius.

These two nodes are children of a summation branch node, which sums them up to produce the IM shown in Figure 18.

Figure 17:

Negative Values near Friendly Buildings

|

Figure 18:

Near Friendly Buildings + Away Friendly Buildings

|

This IM is then multiplied by two further IMs to produce a resulting IM that represents the complete IMTree.

The first multiplier is a game determined limitation on how far away players can construct their buildings, shown in Figure 19.

The building limiter restricts players to build things near other buildings, and not on top of land.

The second multiplier is an ``away enemy buildings" node, which generally biases the player to build as far from enemies as possible, shown in Figure 20.

Figure 19:

Game Limitation on Building Locations

|

Figure 20:

Away Enemy Buildings

|

Figure 21:

Final Building Placement IM

|

Figure 22:

Final Building Placement IM in A More Complex Situation

|

The final result is the IM shown in Figure 21.

This IMTree leads the player to construct buildings in a rough hexagon grid, which is an efficient pattern.

Note that this IMTree encodes a spatial reasoning strategy that can be applied to any situation, later in the game when more buildings have been constructed the IMTree produces a resultant IM that looks like Figure 22.

The IMAI possesses the following IMTrees, each of which has a specific purpose:

- attack details places to send units to attack

- defend details places to send units to defend

- stage details places to send units to move

- civilian construction details where to construct power generators

- military construction details where to produce factories

Each of these IMTrees reduces to a single influence map, with high

values near promising locations to carry out the IMTree task. The

IMAI then uses a local maxima finding algorithm to convert this IM

into a set of objectives that resources can be allocated to. The

algorithm works by iteratively creating objectives at the highest

point in the influence map that is not within some distance of

other objectives. These objectives receive a raw benefit score

based on the value of the IM at that point, this raw benefit

scores is used by the allocation system described in

Section ![[*]](/usr/share/latex2html/icons/crossref.png) .

.

The IMAI uses three basic types of branch nodes, an operator node

which performs mathematical operators on multiple children, a

gaussian node, that applies a gaussian blur function to a single

child, and a normalization node that normalizes a single child.

The operator nodes expose a single parameter to the encoding,

describing which operation it performs on their children. The

operation can be either:

- Addition, value for a square is the summed values for that square in all children

- Subtraction, the first IM is added with negated versions of other children

- Multiplication, child values are multiplied together

- Division, the first child value is divided by the other children

- Maximization, the highest value out of all child is taken for a square

- Minimization, the lowest value out of all children is taken for a square

Normalization nodes always normalize between 0 and 1, and gaussian nodes expose a single coefficient describing the radius of the gaussian blur.

Figure 23:

My Objectives in Supreme Commander

|

An objective is a task which the player considers beneficial to accomplish.

The IMTree system first creates spatial objectives to determine where to attack, defend, and expand.

The IMAI then creates construction and training objectives for each type of building and unit.

Finally, the IMAI creates resource gathering objectives which determine the value of the various resource types to the player.

Having described the spatial reasoning system, the following sections detail objective chaining and resource gathering.

Objective Chaining

Figure 24:

Objective Chaining

|

Many objectives do not have self determined benefit, instead being beneficial with respect to reaching other objectives.

For example, the benefit associated with capturing points depends on both the spatial location of the point which determines how quickly can it be captured and how easily can it be defended, as well as the overall situation of the player's economy, which determines how badly the player needs the resources that point produces.

Objective chaining works by forming the various objectives into a directed graph with associated objectives passing benefit to each other via objective links.

The IMAI uses three types of links: additive, multiplicative and hypothetical.

Spatial objectives are chained to unit creation objectives through hypothetical links - by performing a hypothetical allocation with an addition unit of the type the objective creates, we can take the gain in benefit as the benefit to associate with the unit creation objective.

For example, if having a scout allowed me to get 15 additional benefit, We would associate 15 benefit with training more scouts.

Unit creation objectives are chained to building construction objectives by simple additive links - if a cruiser is worth 45 points to me and a destroyer is worth 65, then a shipyard, which produces cruisers and destroyers, is worth 110 (unless We already have a shipyard, in which case this is reduced depending on how much the current shipyard is being used).

Resource gathering objectives have additive links to create power generator objectives, and multiplicative links to capture points objectives, which come with a normalized fitness from the spatial system relating to how defensible of a location they are in.

In Figure 24 the acquire oil objective is passing benefit to the capture point objectives, which in turn passes benefit to the create scout objective, as that is the best unit to capture points.

This benefit finally chains on to the create docks objective, leading the player to assign benefit to creating docks proportional to its need for resources - which is very high at the beginning of the game because income is very small.

The objective chaining system propagates benefit from objectives to their prerequisites with the result being a collection of objectives, from places on the map to attack and defend, to construction and unit creation orders.

Each objective has an associated benefit, determined both from its own merit and its relationship to other objectives.

Allocation

Once resources have been identified and objectives have been created, the IMAI searches to finds a good allocation of resources to objectives.

The purpose of allocation is to find the resource to objective allocation that maximizes expected benefit.

To determine this expected benefit, We create resource-objective benefit functions which estimate the benefit the player will receive by allocating some set of resources to an objective.

Most objective types have raw benefit scores associated with them as they are created, this benefit being partially realized by the set of resources allocated to that objective.

Benefit functions return benefit for unfeasible allocations: allocations which cannot achieve the objective still have some estimated benefit - allocating 2/3 of the money required to construct a building returns 2/3 of the raw benefit associated with constructing that building.

Allowing benefit for unfeasible allocations provides a simpler landscape for the allocation search algorithm.

To make sure that final allocations are feasible We also develop feasibility functions, which final allocations are required to pass.

We develop three core benefit functions, for the three categories of objectives: spatial, building construction and unit creation, and resource gathering.

The IMTree system assigns a raw benefit to spatial objectives as they are created, which is realized by allocating units, allocating a group of units close to the goal and powerful enough to overcome potential resistance returns maximal benefit, while allocating a smaller group of poorly matched units that are farther away returns less benefit.

To determine how well matched units are We develop a combat estimation function which estimates the ratio of strengths between two group of units as described in Section ![[*]](/usr/share/latex2html/icons/crossref.png) .

.

Spatial objectives are feasible if at least one unit has been allocated and the ratio of strengths between friendly and enemy units surpasses a certain threshold.

We encode and evolve this parameter to allow the search to coordinate risk-aversion with the player's overall strategy.

Construction and training objectives receive their benefit from the objective chaining system, which propagates it from associated spatial objectives - an attack objectives adding benefit to objectives that train offensive units.

For buildings, this benefit is adjusted by a metric of long term usage calculated based on total usage over the last few minutes of each category of building, additional docks are only constructed if existing ones are running at capacity.

Construction and training objectives are feasible if enough basic resources have been allocated to purchase the unit and if a builder or build-point has been allocated.

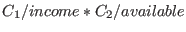

Resource gathering objectives have benefit proportional to

.

.

and

and  are basic real valued coefficients describing the players spending behavior that are encoded and evolved.

The IMAI assigns less priority to stockpiling a resource as the stock or income of that resource grows, leading that resource to be more freely spent.

The IMAI does not directly assign resources to achieve resource gathering objectives, using them instead as proxies in the objective chaining system to pass benefit onto the objectives that produce resources: capturing points and building power generators - Section

are basic real valued coefficients describing the players spending behavior that are encoded and evolved.

The IMAI assigns less priority to stockpiling a resource as the stock or income of that resource grows, leading that resource to be more freely spent.

The IMAI does not directly assign resources to achieve resource gathering objectives, using them instead as proxies in the objective chaining system to pass benefit onto the objectives that produce resources: capturing points and building power generators - Section ![[*]](/usr/share/latex2html/icons/crossref.png) .

The benefit associated with resource gathering objectives is also applied as a cost to allocations of such basic resources, the expected benefit resulting from allocating 1000 resources to Task A is reduced by the value of those 1000 resources.

This gives the IMAI a tendency to maintain a reserve of basic resources until high benefit objectives become available (attacking an enemy weakness, or defending against incoming attacks).

.

The benefit associated with resource gathering objectives is also applied as a cost to allocations of such basic resources, the expected benefit resulting from allocating 1000 resources to Task A is reduced by the value of those 1000 resources.

This gives the IMAI a tendency to maintain a reserve of basic resources until high benefit objectives become available (attacking an enemy weakness, or defending against incoming attacks).

To perform the actual allocation We have developed two techniques: a genetic algorithm, and a greedy search algorithm.

We first developed a genetic algorithm (AllocGA) to do the resource to objective allocation.

AllocGA came from work We did for my masters thesis that used genetic algorithms to do strike force asset allocation within a real-time strategy game [42,43,44,45,46].

Section ![[*]](/usr/share/latex2html/icons/crossref.png) provides an overview of genetic algorithms.

AllocGA is a GA which uses single point crossover, bitwise mutation and roulette wheel selection.

AllocGA used a simple enumeration encoding for the allocation, with each resource receiving a set of bits to encode an integer identifying which resource it was allocated to.

It encodes potential allocations between resources and objectives as individuals in its population.

The fitness for each individual is a summation of the benefits expected from each element in its allocation.

AllocGA was highly effective at finding good allocations, particularity in light of the complexity of some of the allocations.

However, AllocGA suffered from two fundamental issues: it was computationally expensive to run a GA while the game was running, and when rerun it would produce allocations that were similar in overall benefit but different in implementation.

The second issue led to a critical case of indecisiveness as it would frequently redirect boats to other goals, to the point where nothing was accomplished.

For example, the player has two scouts and two points it wants to capture on opposite sides of the map.

The first run through AllocGA sends scout 1 to point A, and scout 2 to point B.

Thirty seconds later when run again, it redirects scout 1 to point B, and scout 2 to point A.

It repeats this process indefinitely, with neither point ever being captured because the scouts are constantly turning around.

To solve this problem We developed a greedy algorithm to perform the allocation.

The greedy algorithm works by scanning all possible resource and objective pairs and taking the one which provides the most benefit.

It then repeats on the remaining resources and objectives so long as its continues to increase the sum benefit of the complete allocation.

The greedy allocator was significantly faster and more repeatable then the GA, but was not as good at finding good allocations in the face of more complicated situations, such as when it takes a particular mix of units to effectively assault an enemy fortification.

provides an overview of genetic algorithms.

AllocGA is a GA which uses single point crossover, bitwise mutation and roulette wheel selection.

AllocGA used a simple enumeration encoding for the allocation, with each resource receiving a set of bits to encode an integer identifying which resource it was allocated to.

It encodes potential allocations between resources and objectives as individuals in its population.

The fitness for each individual is a summation of the benefits expected from each element in its allocation.

AllocGA was highly effective at finding good allocations, particularity in light of the complexity of some of the allocations.

However, AllocGA suffered from two fundamental issues: it was computationally expensive to run a GA while the game was running, and when rerun it would produce allocations that were similar in overall benefit but different in implementation.

The second issue led to a critical case of indecisiveness as it would frequently redirect boats to other goals, to the point where nothing was accomplished.

For example, the player has two scouts and two points it wants to capture on opposite sides of the map.

The first run through AllocGA sends scout 1 to point A, and scout 2 to point B.

Thirty seconds later when run again, it redirects scout 1 to point B, and scout 2 to point A.

It repeats this process indefinitely, with neither point ever being captured because the scouts are constantly turning around.

To solve this problem We developed a greedy algorithm to perform the allocation.

The greedy algorithm works by scanning all possible resource and objective pairs and taking the one which provides the most benefit.

It then repeats on the remaining resources and objectives so long as its continues to increase the sum benefit of the complete allocation.

The greedy allocator was significantly faster and more repeatable then the GA, but was not as good at finding good allocations in the face of more complicated situations, such as when it takes a particular mix of units to effectively assault an enemy fortification.

Combat Estimation

We develop a combat estimation system that the IMAI uses to estimate the benefit it will receive from sending its forces to engage enemy units.

This is an non-trivial and important task for RTS game players to do which requires significant experience for humans to learn.

This is also an area where commercial RTS AI is traditionally weak, forming one of the most effective ways for humans to manipulate artificial opponents.

To do this correctly We need a function that properly estimates the outcome of combat between any two collections of units.

We first outline several desired properties for this function:

- More

Less, 5 Riflemen should defeat 4 Riflemen

Less, 5 Riflemen should defeat 4 Riflemen

- Environment - Skirmishers in broken terrain defeat Cavalry but Cavalry in open terrain defeat Skirmishers.

- Mixed Forces - 5 Riflemen, 3 Anti Tank Guns, 3 Tanks are better then 8 Riflemen, 6 Tanks

- Heavy weapons - 1 tank defeats 100 riflemen, i.e. some units cannot by defeated purely through numbers

- Economies of scale - 1 zealot defeats 4 marines, but 40 marines defeat 10 zealots

- Group Dynamics - Unit types C and D are weak individually, but powerful when used together

This is a complex problem - units have a wide variety of abilities and skills that cannot be distilled to a single number.

Units have many interactions both with supporting units, enemy abilities, and the nature of the terrain itself.

Because of this a perfect estimation of effectiveness would be extremely difficult to abstract out of most RTS games.

Most commercial RTS AI reduces each unit to a single strength value, incorporates some basic bonuses / penalties based on paper-rock-scissors dynamics, and then compares the summed strengths of both groups of units.

The systems successfully achieve the first two properties, but leave significant room for improvement with the last four issues.

We developed a novel combat estimation technique inspired by game-theory.

We define the output of the function as a single floating point value between 0 and 1 relating the ratio of strengths between the two groups.

A score of 1 means that my forces should completely destroy the enemy with virtually no casualties, while a score of 0 is total defeat, and a .5 is an even fight.

We ignore the concepts of terrain and economies of scale, as neither of those are fundamentally important in LagoonCraft.

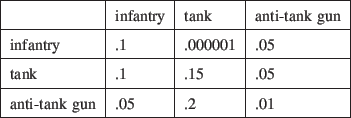

We create a unit-effectiveness matrix describing how quickly a unit can kill any other opposing unit under ideal conditions, an example of which is shown in Figure 25.

Figure 25:

Example Unit Effectiveness Matrix

|

In my example matrix, for example, the infantry unit can destroy .1 other infantry units within some arbitrary time unit.

So in 10 time units a squad of infantry can completely destroy an enemy squad of infantry, while taking virtually forever to destroy an enemy tank.

Figure 26:

Unit Mixed Strategies

|

For each unit on both sides We calculate a mixed strategy for how they will distribute their offensive capabilities.

We assume that units can perfectly distribute their fire in whichever way they want.

Units distribute their offensive capabilities against the set of enemy units proportional to their unit effectiveness against that unit divided by their sum effectiveness against all enemy units, as in Equation 2.

|

(2) |

Were  an

an  are units from sides

are units from sides  and

and  respectively.

respectively.

is the percentage of

is the percentage of  's attacks that are going to

's attacks that are going to  and

and  is the unit effectiveness score describing how much damage

is the unit effectiveness score describing how much damage  can do to

can do to  .

In Figure 26 for example, the riflemen on the left are distributing their fire evenly against the riflemen on the right, because they have very little effectiveness against the tank.

The tank on the right is effective against most unit types, so it is distributing its fire fairly evenly.

The anti-tank guns on the left are primarily useful against enemy armor, so they are concentrating on the enemy tanks.

.

In Figure 26 for example, the riflemen on the left are distributing their fire evenly against the riflemen on the right, because they have very little effectiveness against the tank.

The tank on the right is effective against most unit types, so it is distributing its fire fairly evenly.

The anti-tank guns on the left are primarily useful against enemy armor, so they are concentrating on the enemy tanks.

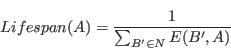

Once the mixed strategies for each unit have been calculated, we can determine total lifespan for each unit, per Equation 3.

|

(3) |

For our example this produces the lifespans shown in Figure 27.

Figure 27:

Unit Lifespans

|

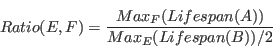

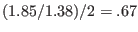

We then calculate the ratio of strength between the two forces as:

|

(4) |

The longest surviving unit on the left is the Tank, which survives 1.85 time units, compared to the tanks on the right which survive 1.38 time units.

This gives a strength ratio of

.

Our estimation system is giving the left group of units a moderate advantage.

While the actual outcome of the fight would depend upon many factors, my estimation system does a significantly better job of dealing with the important dynamics of the problem that the ``sum and compare" systems present in most RTS games.

Expanding my force composition system to deal with terrain could be done by having various unit-effectiveness matrix's for each type of terrain.

Dealing with economies of scale and group dynamics would be very interesting avenues for future research.

.

Our estimation system is giving the left group of units a moderate advantage.

While the actual outcome of the fight would depend upon many factors, my estimation system does a significantly better job of dealing with the important dynamics of the problem that the ``sum and compare" systems present in most RTS games.

Expanding my force composition system to deal with terrain could be done by having various unit-effectiveness matrix's for each type of terrain.

Dealing with economies of scale and group dynamics would be very interesting avenues for future research.

These systems combine together to form the IMAI, which can represent and play robust RTS strategies.

Instead of hand-tuning these strategies, which is time-consuming and error-prone, We use automated search techniques to generate them.

Specifically, We use a co-evolutionary genetic algorithm, as described in the next chapter.

With the IMAI providing a representation of game-playing strategies, I

can investigate methods for generating effective strategies. We develop

a Co-evolutionary Queue based Genetic Algorithm (CoQuGa) to perform

this search. Searching to find good strategies instead of hand-coding

them reduces the cost in expert knowledge and allows for the

development of more complex players. RTS games are designed to have a

non-linear search space of complex strategies, making it difficult to

apply many traditional search techniques. We use genetic algorithms

because they have been historically effective at solving difficult

search problems [36].

CoQuGa maintains a population of individuals, each of which in turn

encodes a strategy for the IMAI to use. We evaluate these Individuals

within CoQuGa by playing them against other members of the population,

making CoQuGa a co-evolutionary system. CoQuGa is a steady state GA -

frequently creating and destroying a handful of individuals instead of

occasionally replacing the entire population. Evaluating individuals

requires running the game, which is computationally expensive. To

overcome this we use a master-slave distributed model that distributes

evaluations over a network of computers, shown in Figure 6.1. Within

the master-slave model, the master maintains the population and

applies genetic operators while the slaves perform the

This demo file is intended to serve as a ``starter file''

for IEEE journal papers produced under LATEX using

IEEEtran.cls version 1.7 and later.

Wish you the best of success.

mds

January 11, 2007

Subsection text here.

Subsubsection text here.

The conclusion goes here.

Appendix one text goes here.

Appendix two text goes here.

The authors would like to thank...

- url@samestyle

- 1

-

=2plus

4 4 solutions for complex tasks,'' in Proceedings of the 5th International

Conference on Genetic Algorithms (GA-93), 1993, pp. 264-270. [Online].

Available: citeseer.ist.psu.edu/angeline93competitive.html=0pt

- 2

-

D. B. Fogel, Blondie24: Playing at the Edge of AI.Morgan Kauffman, 2001.

- 3

-

A. L. Samuel, ``Some studies in machine learning using the game of checkers,''

IBM Journal of Research and Development, vol. 3, pp. 210-229, 1959.

- 4

-

J. B. Pollack, A. D. Blair, and M. Land, ``Coevolution of a backgammon

player,'' in Artificial Life V: Proc.the Fifth Int. Workshop on the Synthesis and Simulation of Living Systems, C. G.

Langton and K. Shimohara, Eds.

Cambridge, MA: The MIT Press, 1997, pp. 92-98.

- 5

-

G. Tesauro, ``Temporal difference learning and td-gammon,''

Communications of the ACM, vol. 38, no. 3, 1995.

- 6

-

=2plus

4 4 www.blizzard.com/starcraft=0pt

- 7

-

R. E. Inc., ``Dawn of war,'' 2005, http://www.dawnofwargame.com.

- 8

-

G. P. Games, ``Supreme commander, http://www.supremecommander.com,'' 2007.

- 9

-

R. E. Inc., ``Company of heroes,'' 2006, http://www.companyofheroesgame.com/.

- 10

-

=2plus

4 4 Available: www.ageofempires3.com=0pt

- 11

-

A. L. Zobrist, ``A model of visual organization for the game of go,'' in

AFIPS Conf. Proc., 1969, pp. 34, 103-112.

- 12

-

C. Miles and S. J. Louis, ``Co-evolving real-time strategy game playing

influence map trees with genetic algorithms,'' in Proceedings of the

International Congress on Evolutionary Computation, Portland, Oregon.IEEE Press, 2006.

- 13

-

---, ``Towards the co-evolution of influence map tree based strategy game

players,'' in Proceedings of the 2006 IEEE Symposium on Computational

Intelligence in Games.IEEE Press,

2006, pp. 75-82.

- 14

-

---, ``Co-evolving influence map tree based strategy game players,'' in

Proceedings of the 2007 IEEE Symposium on Computational Intelligence in

Games.IEEE Press, 2007, p. Pages: to

appear.

- 15

-

=2plus

4 4 www.blizzard.com/war2bne=0pt

- 16

-

A. Barzel, ``The perplexing conclusion: The essential difference between

natural and artificial intelligence is human beings' ability to deceive,''

Journal of Applied Philosophy 15 (2), pp. 165-178, 1998.

- 17

-

B. L. and W. L., ``Evolving adaptive play for simplified poker,'' in

Proceedings of the IEEE International Conference on Computational

Intelligence.IEEE press, 1998, pp.

108-113.

- 18

-

---, ``An adaptive learning model for simplified poker using evolutionary

algorithms,'' in Proceedings of the IEEE Congress of Evolutionary

Computation.IEEE press, 1999, pp.

153-160.

- 19

-

---, ``Adaptive learning for poker,'' in Proceedings of the Genetic

and Evolutionary Computation Conference, 2000, pp. 566-573.

- 20

-

B. D., P. D., S. J., and S. D., ``Poker as a testbed for machine intelligence

research,'' pp. 1-15, 1998.

- 21

-

---, ``Opponent modeling in poker,'' in Proceedings of the 15th

National Conference of the American Association for Artificial Intelligence,

1998, pp. 493-499.

- 22

-

---, ``Using probabilistic knowledge and simulation to play poker,'' in

Proceedings of the 16th National Conference of the American Association

for Artificial Intelligence, 1999, pp. 697-703.

- 23

-

F. N., ``Studies in machine cognition using the game of poker,'' in CACM

20(4), 1977, pp. 230-245.

- 24

-

K. G. and W. M., ``An investigation of an adaptive poker player,'' in

Proceedings of 14th Australian Joint Conference on Artificial

Intelligence.Springer-Verlag, 2001,

pp. 189-200.

- 25

-

S. J., B. D., P. L., and S. D., ``Learning to play strong poker,'' in

Proceedings of the Sixteenth International Conference on Machine

Learning, 1999.

- 26

-

von Neumann J. and M. O, Theory of Games and Economic Behavior.Princeton University Press, 1944.

- 27

-

J. Holland, Adaptation in Natural and Artificial Systems.University of Michigan Press, 1975.

- 28

-

D. Goldberg and J. Richardson, ``Genetic algorithms with sharing for multimodal

function optimization,'' in Proceedings of the 2nd International

Conference Genetic Algorithms.j. J.

Grefensette, Ed. Hillsdale, NJ: Lawrence Erlbaum, 1987, pp. 41-49.

- 29

-

K. A. DeJong, ``An analysis of the behavior of a class of genetic adaptive

systems,'' 1975.

- 30

-

C. D. Rosin and R. K. Belew, ``Methods for competitive co-evolution: Finding

opponents worth beating,'' in Proceedings of the Sixth International

Conference on Genetic Algorithms, L. Eshelman, Ed.San Francisco, CA: Morgan Kaufmann, 1995, pp. 373-380.

- 31

-

=2plus

4 4 Australian Joint Conference on Artificial Intelligence, 2001, pp.

189-200. [Online]. Available:

citeseer.nj.new.com/kendall01investgation.html=0pt

- 32

-

=2plus

4 4 Available: www.cavedog.com/totala=0pt

- 33

-

R. E. Inc., ``Homeworld,'' 1999, homeworld.sierra.com/hw.

- 34

-

J. E. Laird, ``Research in human-level ai using computer games,''

Communications of the ACM, vol. 45, no. 1, pp. 32-35, 2002.

- 35

-

J. E. Laird and M. van Lent, ``The role of ai in computer game genres,'' 2000,

http://ai.eecs.umich.edu/people/laird/papers/book-chapter.htm.

- 36

-

---, ``Human-level ai's killer application: Interactive computer games,''

2000, http://ai.eecs.umich.edu/people/laird/papers/AAAI-00.pdf.

- 37

-

P. S. Rosenbloom, J. E. Laird, and A. Newell, The Soar Papers.The MIT Press, 1993.

- 38

-

M. Buro, ``Orts,'' 2007, http://www.cs.ualberta.ca/ mburo/orts/.

- 39

-

B. N. J. Renz, ``Qualitative spatial reasoning using constraint calculi,''

M. Aiello, I. Pratt-Hartmann, J. van Benhtem (eds).: The Logic of

Space, 2006.

- 40

-

J. Koza, Genetic Programming: On the Programming of Computers by Means of

Natural Selection.MIT Press, 1992.

- 41

-

L. Meckes, ``goblob,'' 2007, http://canutkiin.jeudego.org/go_blob/.

- 42

-