
We performed a number of experiments and comparisons to demonstrate the performance of the proposed gender classification approach. First, each classifier was tested using manually selected eigen-features. We ran several experiments, varying the number of eigenvectors from 10 to 150. The averaged performances are summarized in Table 1.

In the next set of our experiments, we used GAs to select optimum

subsets of eigenvectors for gender classification. The GA

parameters we used in all experiments are as follows: population

size: 350, number of generations: 400, crossover rate: 0.66 and

mutation rate: 0.04. It should be noted that in every case, the GA

converged to the final solution much earlier (i.e., after 150

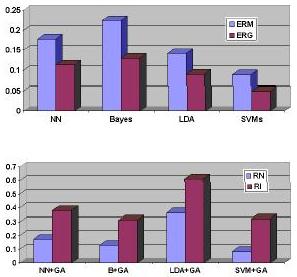

generations). Fig. 2 shows the average error rate obtained in

these runs. The results illustrate clearly that the feature

subsets selected by the GA have reduced the error rate of all the

classifiers significantly.

Fig. 2. (Top): Error rates of various classifiers using feature subsets selected manually or by GAs. ERM: the error rate using the manually selected feature subsets; ERG: the error rate using the GA-selected feature subsets. (Bottom): A comparison between the automatically selected feature subsets and the complete feature set. RN: the ratio between the number of features in the GA-selected feature subsets and the complete feature set; RI: the percentage of the information contained in the GA-selected feature subset.
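To make the GA setup above concrete, the following is a minimal sketch of a bit-string GA in which each bit marks one eigenvector as selected or discarded. The default arguments are the parameters reported in the text. Everything else is an assumption made for illustration: the tournament selection, single-point crossover, and bit-flip mutation operators, the names `run_ga`, `toy_fitness`, and `rn_ri`, and the toy fitness itself, which stands in for classifier accuracy on a validation set. The `rn_ri` helper assumes that RI is measured as the share of total eigenvalue mass carried by the selected eigenvectors.

```python
import random

def run_ga(fitness, n_genes, pop_size=350, n_generations=400,
           crossover_rate=0.66, mutation_rate=0.04, seed=0):
    """Maximize `fitness` over binary masks of length n_genes (n_genes >= 2)."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]
    best, best_score = None, float("-inf")
    for _ in range(n_generations):
        scores = [fitness(ind) for ind in pop]
        top = max(range(pop_size), key=scores.__getitem__)
        if scores[top] > best_score:
            best, best_score = pop[top][:], scores[top]

        def pick():
            # Binary tournament selection (an assumed operator).
            a, b = rng.randrange(pop_size), rng.randrange(pop_size)
            return pop[a] if scores[a] >= scores[b] else pop[b]

        children = []
        while len(children) < pop_size:
            c1, c2 = pick()[:], pick()[:]
            if rng.random() < crossover_rate:
                # Single-point crossover (an assumed operator).
                cut = rng.randrange(1, n_genes)
                c1, c2 = c1[:cut] + c2[cut:], c2[:cut] + c1[cut:]
            for child in (c1, c2):
                for i in range(n_genes):
                    if rng.random() < mutation_rate:
                        child[i] ^= 1  # bit-flip mutation
                children.append(child)
        pop = children[:pop_size]
    return best

def rn_ri(mask, eigenvalues):
    """RN: fraction of eigenvectors kept. RI: fraction of eigenvalue mass kept
    (assumed definition of 'information')."""
    rn = sum(mask) / len(mask)
    ri = sum(ev for bit, ev in zip(mask, eigenvalues) if bit) / sum(eigenvalues)
    return rn, ri

# Hypothetical stand-in for classifier accuracy: reward three "informative"
# eigenvectors and lightly penalize the size of the subset.
INFORMATIVE = {1, 4, 7}

def toy_fitness(mask):
    return sum(mask[i] for i in INFORMATIVE) - 0.1 * sum(mask)

# Small settings keep the demo fast; the defaults match the reported values.
best = run_ga(toy_fitness, n_genes=20, pop_size=40, n_generations=30)
```

In an actual experiment, the fitness function would train and evaluate one of the classifiers on the features indexed by the mask, which is far more expensive than this toy objective.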


As discussed before, different eigenvectors seem to encode different kinds of information. For visualization purposes, we reconstructed the facial images using the selected eigenvectors only (Fig. 3). Several interesting observations can be made from the images reconstructed using the feature subsets selected by GAs.

First, it is obvious that face recognition cannot be performed based on the faces reconstructed using only the eigenvectors selected by the GA: they all look fairly similar to each other. In contrast, the faces reconstructed using the best eigenvectors (i.e., principal components) do reveal identity, as can be seen from the images in the second row. The images reconstructed from the eigenvectors selected by the GA, however, do disclose strong gender information: the reconstructed female faces look more "female" than the reconstructed male faces. This implies that the GA selected eigenvectors that seem to encode gender information. Second, those eigenvectors encoding features unimportant for gender classification seem to have been discarded by the GA. This is obvious from the reconstructed face images corresponding to the first two males shown in Fig. 3. Although both of them wear glasses, the reconstructed faces do not contain glasses, which implies that the eigenvectors encoding glasses were not selected by the GA. Note that the images reconstructed using the 30 most important eigenvectors (second row) preserve features irrelevant to gender classification (e.g., glasses).

Fig. 3. Reconstructed images using the selected feature subsets. First row: original images; Second row: using the top 30 eigenvectors; Third row: using the eigenvectors selected by Bayes-PCA+GA; Fourth row: using the eigenvectors selected by NN-PCA+GA; Fifth row: using the eigenvectors selected by LDA-PCA+GA; Sixth row: using the eigenvectors selected by SVM-PCA+GA.
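The reconstructions discussed above can be sketched as a projection onto a chosen subset of eigenvectors followed by a back-projection into image space. The snippet below is only an illustration under stated assumptions: the random array stands in for vectorized face images, the function name `reconstruct` and the index list in `ga_mask` are hypothetical, and the eigenvectors are obtained from an SVD of the centered data rather than from whatever procedure the experiments actually used.

```python
import numpy as np

def reconstruct(face, mean_face, eigvecs, mask):
    """Rebuild `face` from the eigenvectors flagged in `mask`.

    eigvecs: (n_components, n_pixels) array with orthonormal rows.
    mask:    boolean array of length n_components.
    """
    selected = eigvecs[np.asarray(mask, dtype=bool)]
    coeffs = selected @ (face - mean_face)    # projection coefficients
    return mean_face + selected.T @ coeffs    # back to pixel space

# Stand-in data: 50 "images" of 64 pixels each (not real faces).
rng = np.random.default_rng(0)
faces = rng.normal(size=(50, 64))
mean_face = faces.mean(axis=0)

# Rows of `eigvecs` are the principal directions, ordered by decreasing variance.
_, _, eigvecs = np.linalg.svd(faces - mean_face, full_matrices=False)
n_components = eigvecs.shape[0]

# Second row of Fig. 3: the top 30 eigenvectors.
top30_mask = np.arange(n_components) < 30

# Later rows of Fig. 3: a GA-selected subset (indices here are hypothetical).
ga_mask = np.zeros(n_components, dtype=bool)
ga_mask[[1, 4, 7, 12]] = True

recon_top30 = reconstruct(faces[0], mean_face, eigvecs, top30_mask)
recon_ga = reconstruct(faces[0], mean_face, eigvecs, ga_mask)
```

Because each reconstruction keeps only the components in the mask, anything encoded by a discarded eigenvector is absent from the result, which is consistent with the glasses observation above.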
