Research

Computer Vision: Background Modeling, Object Recognition, Classification and Tracking

Background Modeling and Foreground Detection

Detecting regions of interest in video sequences is one of the most important tasks in most high-level video processing applications. In this work we present a novel approach based on Support Vector Data Description (SVDD) that detects foreground regions in videos with quasi-stationary backgrounds. SVDD is a technique for analytically describing data from a set of population samples. Training Support Vector Machines (SVMs) in general, and SVDD in particular, requires a Lagrange optimization that is computationally intensive. We propose a genetic approach to solve the Lagrange optimization problem more efficiently. The Genetic Algorithm (GA) starts with an initial guess and solves the optimization problem iteratively. We expect this approach to yield accurate results at a lower cost than the traditional Sequential Minimal Optimization (SMO) technique.
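For reference, the Lagrange optimization mentioned above can be written in the standard textbook form of the SVDD dual problem. This is the commonly cited formulation, not necessarily the exact one used in the paper; the symbols $\alpha_i$ (Lagrange multipliers), $K$ (kernel function), $C$ (trade-off constant) and $N$ (number of training samples) are notation assumed here.

```latex
\max_{\alpha}\; \sum_{i=1}^{N} \alpha_i K(x_i, x_i)
  \;-\; \sum_{i=1}^{N}\sum_{j=1}^{N} \alpha_i \alpha_j K(x_i, x_j)
\qquad \text{subject to} \qquad
\sum_{i=1}^{N} \alpha_i = 1, \quad 0 \le \alpha_i \le C .
```

Samples with $\alpha_i > 0$ are the support vectors that define the boundary of the description. Any solver, whether SMO or a genetic search, has to find multipliers that satisfy these constraints.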

- Alireza Tavakkoli, Amol Ambardekar, Mircea Nicolescu, Sushil Louis, "A Genetic Approach to Training Support Vector Data Descriptors for Background Modeling in Video Data", the Proceedings of the 3rd International Symposium on Visual Computing, Lake Tahoe, Nevada, November 2007. (pdf)

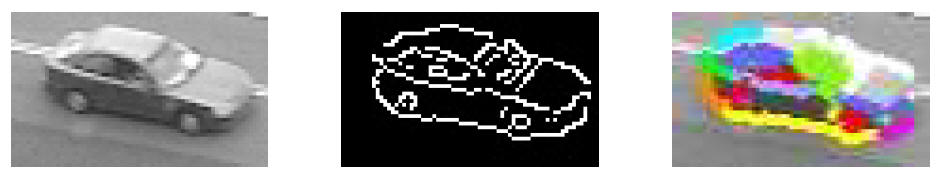

Automated Traffic Surveillance System

We propose a new traffic surveillance system that works without prior, explicit camera calibration and can perform surveillance tasks in real time. The camera's intrinsic parameters and its position with respect to the ground plane are derived using geometric primitives common to any traffic scene. We use optical flow and knowledge of the camera parameters to detect the pose of a vehicle in the 3D world. This information is employed by a model-based vehicle detection and classification technique used by our traffic surveillance application. The object (vehicle) classification uses two new techniques: color contour-based matching and gradient-based matching. Our experiments on several real traffic video sequences demonstrate good results for our foreground object detection, tracking, vehicle detection and vehicle speed estimation approaches.

- Amol Ambardekar, Mircea Nicolescu, and George Bebis, “Efficient Vehicle Tracking and Classification for an Automated Traffic Surveillance System,” the Proceedings of Signal and Image Processing, Kailua-Kona, Hawaii, August 2008. (pdf)

Piecewise Feature Clustering for Object (Vehicle) Tracking

Object tracking is a complex yet essential task in any video surveillance application. Many real-time techniques proposed in the literature rely on frame-to-frame matching of objects. In this work, we present a technique that takes into consideration the inherent temporal coherence that exists across frames, and can therefore track robustly while handling difficult situations such as object acceleration and partial occlusion. SIFT (Scale Invariant Feature Transform) approaches have been shown to perform well for object recognition, due to their robustness to noise and to changes in illumination and viewpoint. We propose to use a SIFT-based method for tracking image features across frames. Tracked SIFT features provide the displacement of each interest point in the image, which, along with the image coordinates and frame number, constitutes a feature vector. All feature vectors are added to a temporal buffer and clustered in order to identify and track coherently moving regions. The proposed clustering method uses an improved K-Means technique where K is determined using a CI (Confidence Interval) metric. We demonstrate our method in the context of a real-time traffic surveillance application.
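The clustering step can be illustrated with a minimal sketch. It runs plain K-Means on feature vectors of the form (x, y, dx, dy, frame), as described above. This is not the paper's improved K-Means: K is fixed by hand rather than chosen by the CI metric, the farthest-point initialization is an assumption made to keep the example deterministic, and the synthetic tracks and all function names are hypothetical.

```python
def sq_dist(p, q):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def init_centers(points, k):
    """Deterministic farthest-point initialization (an assumption of this sketch)."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(sq_dist(p, c) for c in centers)))
    return centers


def kmeans(points, k, max_iters=50):
    """Plain Lloyd's K-Means; returns (labels, centers)."""
    centers = init_centers(points, k)
    labels = None
    for _ in range(max_iters):
        # Assignment step: each feature vector goes to its nearest center.
        new_labels = [min(range(k), key=lambda c: sq_dist(p, centers[c])) for p in points]
        if new_labels == labels:
            break  # assignments stopped changing, so the centers are stable
        labels = new_labels
        # Update step: move each center to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return labels, centers


def make_track(x0, y0, dx, dy, frames, offsets):
    """Synthetic buffer entries (x, y, dx, dy, frame) for one rigidly moving region."""
    return [(x0 + ox + dx * t, y0 + oy + dy * t, dx, dy, t)
            for t in range(frames) for ox, oy in offsets]


offsets = [(0, 0), (2, 1), (1, 3)]                    # three interest points per region
vehicle_a = make_track(10, 10, 5, 0, 3, offsets)      # moving right
vehicle_b = make_track(200, 80, -4, 0, 3, offsets)    # moving left
buffer = vehicle_a + vehicle_b                        # temporal buffer of feature vectors

labels, centers = kmeans(buffer, k=2)
```

In this toy buffer, the features of each synthetic vehicle end up in their own cluster, and the displacement components of each cluster center give that region's average motion. The sketch does not rescale the features; a real system would likely need to balance position, displacement and frame number before clustering.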

- Amol Ambardekar, Mircea Nicolescu, and Monica Nicolescu, “Object Tracking Using Piecewise Feature Clustering,” the Proceedings of Visualization, Imaging and Image Processing, Cambridge, UK, July 2009. (pdf)

Object (Vehicle) Classification

Video surveillance has significant application prospects in areas such as security, law enforcement, and traffic monitoring. Visual traffic surveillance using computer vision techniques can be non-invasive, cost-effective and automated. Detecting and recognizing the objects in a video is an important part of many video surveillance systems, as it helps in tracking the detected objects and gathering important information. In the case of traffic video surveillance, vehicle detection and classification is important because it can help in traffic control and in gathering traffic statistics for use in intelligent transportation systems. Vehicle classification is a difficult problem because vehicles have high intra-class variation and relatively low inter-class variation. In this work, we investigate five different object recognition techniques applied to the problem of vehicle classification: PCA+DFVS, PCA+DIVS, PCA+SVM, LDA, and constellation-based modeling. We also compare them with state-of-the-art techniques in vehicle classification. In the case of the PCA-based approaches, we extend PCA-based face detection to the problem of vehicle classification in order to carry out multiclass classification. We also introduce a variation of the constellation model-based approach that uses a dense representation of SIFT features. We consider three classes (sedans, vans and taxis) and record classification accuracy as high as 99.25% in the case of cars vs vans and 97.57% in the case of sedans vs taxis. We also present a fusion approach that uses both PCA+DFVS and PCA+DIVS and achieves a classification accuracy of 96.42% in the case of sedans vs vans vs taxis.

- Amol Ambardekar, Mircea Nicolescu, George Bebis, and Monica Nicolescu, "Vehicle Classification Framework: A Comparative Study," (under review)

Computer Vision and Human Computer Interaction

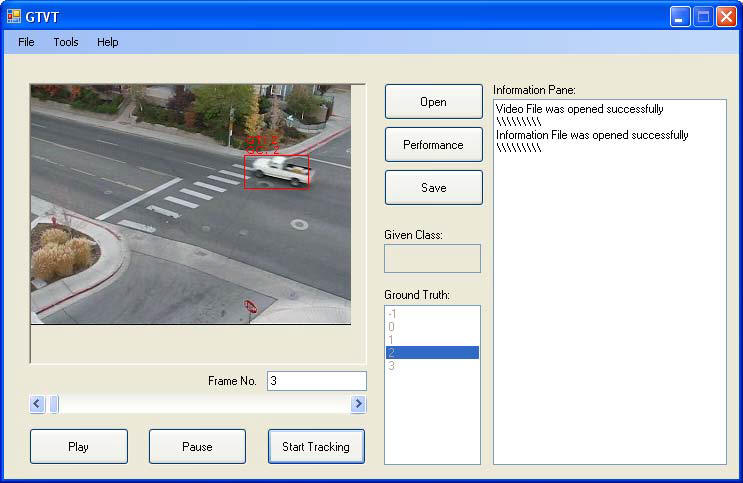

Ground Truth Verification Tool

Computer scientists have proposed different approaches to the difficult problem of content recognition from video data. These approaches are evaluated on many different videos to demonstrate their usefulness and accuracy. A careful comparison and evaluation is needed to find the most suitable method under given conditions. To compare the results produced by video surveillance applications, the ground truth needs to be established. In computer vision, the ground truth has to be provided by humans, which makes it one of the most time-consuming tasks in the evaluation process. This paper presents a tool (GTVT) that allows the user to establish the ground truth for a given video. GTVT provides a user-friendly interface for the cumbersome task of ground truth establishment and verification.

- Amol Ambardekar, Mircea Nicolescu, and Sergiu Dascalu, “Ground Truth Verification Tool (GTVT) for Video Surveillance Systems,” the Proceedings of Advances in Computer Human Interactions, Cancun, Mexico, February 2009. (pdf)

Computer Vision and Robotics

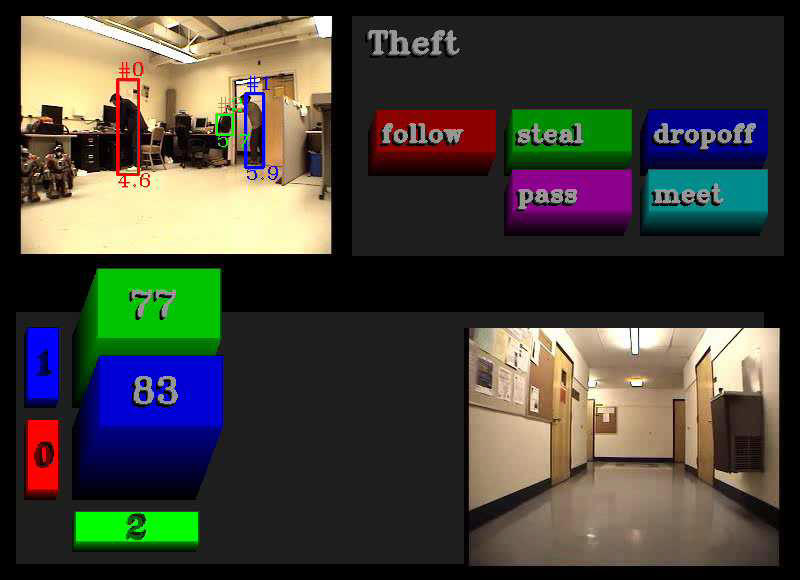

Point Clouds and Range Images for Intent Recognition

The wide availability of inexpensive RGB-D cameras such as Microsoft’s Kinect presents an opportunity to significantly improve the sensing capabilities required for human-robot interaction. In this paper we present an intent recognition system that demonstrates the potential of RGB-D cameras for systems that must interpret human activities. We also discuss systems we are currently developing and touch on techniques we are using to make such systems scalable to significantly larger data sets.

- Richard Kelley, Amol Ambardekar, Liesl Wigand, Monica Nicolescu, and Mircea Nicolescu, "Point Clouds and Range Images for Intent Recognition and Human-Robot Interaction," Proceedings of 2nd Workshop on RGB-D: Advanced Reasoning with Depth Cameras, June 2011. (pdf)

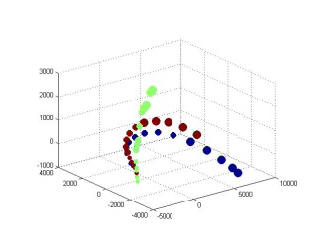

A Developmental Framework for Visual Learning in Robotics

In this work we investigate a developmental learning strategy for robotic applications. We examine two approaches, one based on Isomap dimensionality reduction and one based on feature-based learning. In the training phase, the robot is presented with objects (e.g. squares, circles) and labels regarding their properties (e.g. shape, size, orientation). From these, the system learns a representation of each property (concept) and is then able to recognize it in new, previously unseen images. Based on our experiments, we have concluded that in the Isomap space, given enough samples of objects and their properties, each dimension represents one object property or a combination of them. The feature-based approach uses a pre-processing technique to extract predefined features from an object image. Our main investigation concentrates on the Isomap space and on finding an automated inference mechanism for learning object properties (concepts).

- Amol Ambardekar, Alireza Tavakkoli, Mircea Nicolescu, Monica Nicolescu, "A Developmental Framework for Visual Learning in Robotics," Proceedings of the International Conference on Image Processing, Computer Vision and Pattern Recognition, Las Vegas, Nevada, July 2010. (pdf)

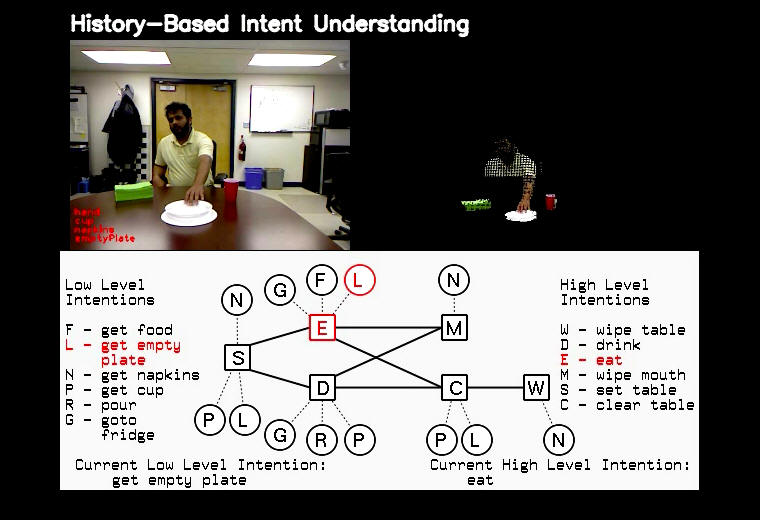

Integrating Context into Intent Recognition Systems

A precursor to social interaction is social understanding. Every day, humans observe each other and on the basis of their observations “read people’s minds,” correctly inferring the goals and intentions of others. Moreover, this ability is regarded not as remarkable, but as entirely ordinary and effortless. If we hope to build robots that are similarly capable of successfully interacting with people in a social setting, we must endow our robots with an ability to understand humans’ intentions. In this paper, we propose a system aimed at developing those abilities in a way that exploits both an understanding of actions and the context within which those actions occur.

- Richard Kelley, Alireza Tavakkoli, Chris King, Amol Ambardekar, Mircea Nicolescu, and Monica Nicolescu, "Integrating Context into Intent Recognition Systems," the Proceedings of 7th International Conference on Informatics in Control, Automation and Robotics, Madeira, Portugal, June 2010. (pdf)

Biometrics

Signature Recognition, Palmprint Recognition and Keystroke Dynamics Analysis

Handwritten signatures are one of the oldest biometric traits for human authorization and authentication of documents. The majority of commercial applications deal with the static form of the signature. In this work we present a method for off-line signature recognition. We use morphological dilation on the signature template to measure the pixel variance and hence the inter-class and intra-class variations in the signature.

Palmprints are rich in texture information, which can be used for classification purposes. Wavelets are very good at extracting localized texture information. In this paper a new and faster type of wavelet, called Kekre’s wavelets, is used to extract feature vectors from palmprints. Multilevel decomposition is performed and feature vectors are matched using Euclidean distance and Relative Energy Entropy.

Keystroke dynamics is one of the important behavior-based biometric traits. It has a moderate level of uniqueness and requires little user cooperation. In this work, keystroke dynamics analysis is performed using the relative entropy and the Euclidean distance between keystroke timing sequences.
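The two measures named above can be sketched as follows. This is a minimal illustration, not the published method: it assumes each timing sequence is a list of positive durations in milliseconds, that relative entropy means the Kullback-Leibler divergence between sequences normalized to sum to 1, and that smaller values indicate a closer match. The function names and sample timings are hypothetical.

```python
import math


def euclidean_distance(a, b):
    """Euclidean distance between two equal-length timing sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def relative_entropy(p, q):
    """Kullback-Leibler divergence D(P || Q) of two timing sequences.

    Each sequence is first normalized to sum to 1 so it can be treated as a
    discrete distribution. All timings are assumed to be strictly positive.
    """
    total_p, total_q = sum(p), sum(q)
    return sum((x / total_p) * math.log((x / total_p) / (y / total_q))
               for x, y in zip(p, q))


# Hypothetical key-hold timings in milliseconds for the same typed phrase.
enrolled = [120, 95, 140, 110]   # stored template
genuine = [125, 90, 138, 112]    # new sample from the same user
impostor = [80, 150, 95, 170]    # sample from a different typist

genuine_scores = (euclidean_distance(enrolled, genuine),
                  relative_entropy(enrolled, genuine))
impostor_scores = (euclidean_distance(enrolled, impostor),
                   relative_entropy(enrolled, impostor))
```

With these sample values, both scores are smaller for the genuine attempt than for the impostor. A verification system would compare the scores against thresholds tuned on enrollment data.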

- H. B. Kekre, V. A. Bharadi, S. Gupta, A. A. Ambardekar, V. B. Kulkarni, "Off-line signature recognition using morphological pixel variance analysis", Proceedings of the ACM International Conference and Workshop on Emerging Trends in Technology, pp. 3-10, February 2010. (pdf)

- H. B. Kekre, V. A. Bharadi, V. I. Singh, and A. A. Ambardekar, "Palmprint recognition using Kekre's wavelet's energy entropy based feature vector", Proceedings of the ACM International Conference & Workshop on Emerging Trends in Technology, pp. 220-223, February 2011. (pdf)

- H. B. Kekre, V. A. Bharadi, P. Shaktia, V. Shah, and A. A. Ambardekar, "Keystroke dynamic analysis using relative entropy & timing sequence Euclidian distance", Proceedings of the ACM International Conference & Workshop on Emerging Trends in Technology, pp. 39-45, February 2011. (pdf)

Applied Physics

Pulsed Optical Tweezers

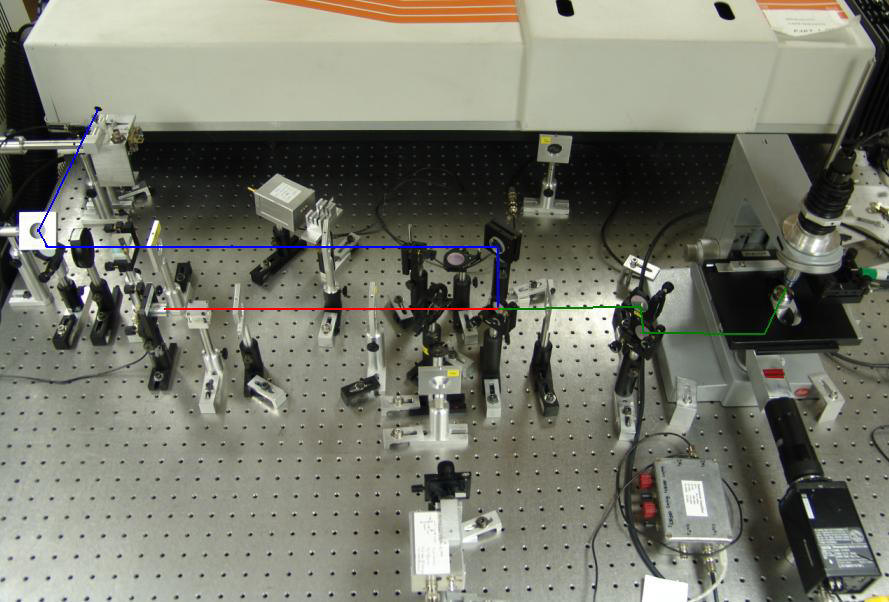

We developed pulsed laser tweezers and used them successfully for levitation and manipulation of microscopic particles stuck on a glass surface. Pulsed laser tweezers have all the characteristics of continuous-wave (CW) laser tweezers, with the addition of a pulsed laser for levitating stuck microparticles. An infrared pulsed laser at 1.06 μm was used to generate a large gradient force (up to 10⁻⁹ N) within a short duration (~45 μs) that overcomes the adhesive interaction between the stuck particles and the surface. A low-power continuous-wave diode laser at 785 nm was then used to capture and manipulate the levitated particle. We demonstrated that both dielectric and biological micron-sized stuck particles can be levitated and manipulated with this technique, including polystyrene beads, yeast cells, and Bacillus cereus bacteria. We also measured the single-pulse levitation efficiency for polystyrene beads as a function of the pulse energy, the axial displacement from the stuck particle to the pulsed laser focus, and the percentage of NaCl present in the sample. The results showed that the levitation efficiency was as high as 88% for 2.0 μm polystyrene spheres.

- Amol Ambardekar and Yong-qing Li, “Optical levitation and manipulation of stuck particles with pulsed optical tweezers,” Optics Letters, 30(14), 2005. (pdf)

- Amol Ambardekar and Yong-qing Li, “Pulsed optical tweezers for levitation and manipulation of stuck biological particles,” the Proceedings of Conference on Lasers and Electro-Optics, Baltimore, Maryland, USA, May 2005. (pdf)